Dear forum members,

I am running into several problems with Volumio 2. I had an older version running fine and was so impressed that I ordered a HiFiBerry DAC for the Raspberry B it was running on. I wanted to test the DAC, got a message that a newer version was available, and upgraded.

It seems I upgraded into a lot of problems.

After a while I decided to start anew and downloaded and installed the latest image for the Raspberry. Sometimes DHCP handed out a new IP address, sometimes not. When I opened the IP address in a browser, the browser gave an "unable to connect" message. The wireless network Volumio sometimes existed, sometimes not. The 192.168.211.1 address did not work, and there was no reaction from volumio.local. Several times I just had to pull the power plug to give it a new try.

In short I am wondering what the heck is going on?

Took the SDHC card out again and burned the image from November 23, 2016 with Etcher. Nothing changed.

To check if the Raspberry B was OK I started it with another image that I use a lot, and there were no glitches, errors, malfunctions etc.; everything worked as expected. Even the wifi came up nicely and connected to my home network.

This morning I started the November image again and logged in by ssh. It received a valid IP address and I browsed to it.

“Problem loading page. Unable to connect.”

Tried to have a look at the logfiles:

volumio@volumio:/static/var/log$ sudo tail -n50 lastlog

We trust you have received the usual lecture from the local System

Administrator. It usually boils down to these three things:

#1) Respect the privacy of others.

#2) Think before you type.

#3) With great power comes great responsibility.

[sudo] password for volumio:

sudo: unable to mkdir /var/lib/sudo/lectured: No space left on device

sudo: unable to mkdir /var/lib/sudo/ts: No space left on device

volumio@volumio:/static/var/log$ df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mmcblk0p2 2.0G 497M 1.4G 26% /imgpart

/dev/loop0 247M 247M 0 100% /static

overlay 354M 346M 0 100% /

devtmpfs 233M 0 233M 0% /dev

tmpfs 242M 0 242M 0% /dev/shm

tmpfs 242M 8.5M 233M 4% /run

tmpfs 5.0M 4.0K 5.0M 1% /run/lock

tmpfs 242M 0 242M 0% /sys/fs/cgroup

tmpfs 242M 4.0K 242M 1% /tmp

tmpfs 242M 0 242M 0% /var/spool/cups

tmpfs 242M 4.0K 242M 1% /var/log

tmpfs 242M 0 242M 0% /var/spool/cups/tmp

/dev/mmcblk0p1 61M 29M 33M 47% /boot

tmpfs 49M 0 49M 0% /run/user/1000

volumio@volumio
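A note on reading the `df -h` listing above: the `sudo` errors point at `/var/lib/sudo`, which lives on the overlay mounted on `/`, and that overlay shows 0 available. The loop-mounted `/static` may well be a read-only image for which 100% is expected, but that is an assumption. Below is a minimal sketch for spotting full filesystems at a glance. The function name `full_filesystems` and the default threshold of 95 are made up for illustration, and it assumes mount points without spaces.

```shell
# Read df output on stdin (header line first) and print every mount
# point whose use percentage is at or above the threshold.
# Usage: df -P | full_filesystems [THRESHOLD]   (THRESHOLD defaults to 95)
full_filesystems() {
    awk -v limit="${1:-95}" '
        NR > 1 {
            use = $5
            sub(/%/, "", use)          # "100%" -> "100"
            if (use + 0 >= limit) {
                print $6, $5           # mount point, then percentage
            }
        }'
}
```

Run as `df -P | full_filesystems` on the Pi; with the listing above it would report `/static` and `/`.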

With top I noticed that the node process is sitting in the ninety percent range a lot of the time.

Has anyone got some insights into these troubles?

Please advise,

pablo

Please flash a new image, boot the RPi and leave it running for at least 10 minutes without turning it off. A number of processes are carried out on first boot, and if they are interrupted this can lead to an incomplete installation … you were seeing this in your ‘top’ output for ‘node.’
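One way to follow this advice without guessing at the clock is to poll from another machine until the web interface answers. The sketch below is only an illustration: the helper name `wait_until`, the 10-second delay and the host name in the example are assumptions, and nothing like this ships with Volumio.

```shell
# wait_until ATTEMPTS DELAY COMMAND [ARGS...]
# Re-run COMMAND until it succeeds or ATTEMPTS runs out.
# Returns 0 on the first success, 1 if every attempt failed.
wait_until() {
    attempts=$1
    delay=$2
    shift 2
    while [ "$attempts" -gt 0 ]; do
        if "$@"; then
            return 0
        fi
        attempts=$((attempts - 1))
        # Only sleep when another attempt will follow.
        if [ "$attempts" -gt 0 ]; then
            sleep "$delay"
        fi
    done
    return 1
}

# Example (assumed host name): poll the web UI for up to 10 minutes.
# wait_until 60 10 curl -fs -o /dev/null http://volumio.local/
```

The point of the retry loop is that the Pi is never power-cycled while it is still busy; you only act once the command has either succeeded or clearly timed out.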

Did as you advised. Used the latest image (May 5). Waited for 20 minutes.

Tried to connect with a Firefox browser, which answered with "Problem loading page. Unable to connect."

After thirty minutes I tried the CLI:

Nmap scan report for 192.168.1.146

Host is up (0.021s latency).

Not shown: 997 closed ports

PORT STATE SERVICE

22/tcp open ssh

111/tcp open rpcbind

3006/tcp open deslogind
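Worth noting about the scan above: a browser pointed at a plain `http://` address connects to port 80 unless told otherwise, and 80/tcp is not among the open ports listed, which fits the "unable to connect" message. A small sketch for checking this in saved scan output follows; the function name `port_open_in_scan` is invented for illustration and it only understands the `PORT STATE SERVICE` lines shown here.

```shell
# Read nmap's normal output on stdin and succeed (exit 0) only if the
# given TCP port number appears with state "open".
# Usage: nmap HOST | port_open_in_scan 80
port_open_in_scan() {
    awk -v port="$1/tcp" '
        $1 == port && $2 == "open" { found = 1 }
        END { exit !found }'
}
```

For example, `nmap 192.168.1.146 | port_open_in_scan 80 || echo "web UI port is not open"` would print the warning for the scan above.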

peter@digdesk16:~$ ping -c4 volumio.local

PING volumio.local (192.168.1.146) 56(84) bytes of data.

64 bytes from volumio.fritz.box (192.168.1.146): icmp_seq=1 ttl=64 time=0.637 ms

64 bytes from volumio.fritz.box (192.168.1.146): icmp_seq=2 ttl=64 time=0.524 ms

64 bytes from volumio.fritz.box (192.168.1.146): icmp_seq=3 ttl=64 time=0.537 ms

64 bytes from volumio.fritz.box (192.168.1.146): icmp_seq=4 ttl=64 time=0.652 ms

--- volumio.local ping statistics ---

4 packets transmitted, 4 received, 0% packet loss, time 3001ms

rtt min/avg/max/mdev = 0.524/0.587/0.652/0.062 ms

:: CLI ::

volumio@volumio:/volumio/app/plugins/system_controller/volumio_command_line_client$ volumio restart

/volumio/app/plugins/system_controller/volumio_command_line_client/volumio.sh: line 128: stop: command not found

Please advise,

pablo

Hi,

I ran some tests with a fresh install on an RPi B with a wifi dongle (rt8188) and no ethernet connection.

Result: it works perfectly. The hotspot is created after about 160 seconds. Once connected to it, I entered my wifi password; the wifi restarted, connected to my network, and my library is now being scanned. I would say it took less than 6 minutes in total.

OK, this does not really help you, but it shows that the image is working fine.

@balbuze: Thank you for the confirmation. But nowadays I’m never quite sure what people mean by an RPi type B. I mean the Pi 1 Model B from 2012, with 2 USB ports, a LAN connector, 512 MB of memory and the short set of GPIO pins.

Thing is, I installed a version at the beginning of December 2016. Worked right out of the box! Amazing. And now I can’t get it to work.

The latest install now has the same problems on another raspi type B. I have two of them.

I’m now burning a micro SDHC card with the May 5 image for my raspi Zero. Think I will be trying it within the hour.

Greetings,

pablo

Dear forum members,

As stated above I prepared a raspi zero with the latest volumio2 image (May 5) and powered it on. My Android smartphone sees a wireless network named volumio. When I try to connect to this network it keeps authenticating for ages and then comes back with a message that it is avoiding a poor connection, though the phone is hardly a meter away from the raspi zero.

My laptop is not even 2 meters from the raspi zero and also sees the volumio network. It connects with it. But it won’t connect with a page on volumio.local or 192.168.211.1.

pablo@digdesk16:~$ ping -c4 volumio.local

ping: unknown host volumio.local

pablo@digdesk16:~$ ping -c4 192.168.211.1

PING 192.168.211.1 (192.168.211.1) 56(84) bytes of data.

From 192.168.1.111 icmp_seq=1 Destination Host Unreachable

From 192.168.1.111 icmp_seq=2 Destination Host Unreachable

From 192.168.1.111 icmp_seq=3 Destination Host Unreachable

From 192.168.1.111 icmp_seq=4 Destination Host Unreachable

--- 192.168.211.1 ping statistics ---

4 packets transmitted, 0 received, +4 errors, 100% packet loss, time 2999ms

pipe 4
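One detail in the ping output above: the "Destination Host Unreachable" lines come from 192.168.1.111, an address in the home network range rather than in 192.168.211.x. That suggests, without proving it, that the laptop was not holding an address handed out by the hotspot at that moment. A rough sketch for comparing two addresses is below. It assumes the hotspot uses a /24 network (not confirmed anywhere in this thread), and the name `same_slash24` is made up.

```shell
# Succeed (exit 0) if two dotted-quad IPv4 addresses share their first
# three octets, i.e. would sit in the same /24 network.
# Assumption: a /24 netmask; other masks need a real calculation.
same_slash24() {
    [ "${1%.*}" = "${2%.*}" ]
}

# Example with the addresses from the output above:
# same_slash24 192.168.211.1 192.168.1.111 || echo "different networks"
```

If the check fails for the laptop's own wifi address, looking at what address the laptop actually received from the volumio network is a sensible next step.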

I really could use some help, please,

pablo

You may want to check this for diagnostic procedure

Tried to follow the diagnostic procedures.

Sorry, but could not find iwconfig.

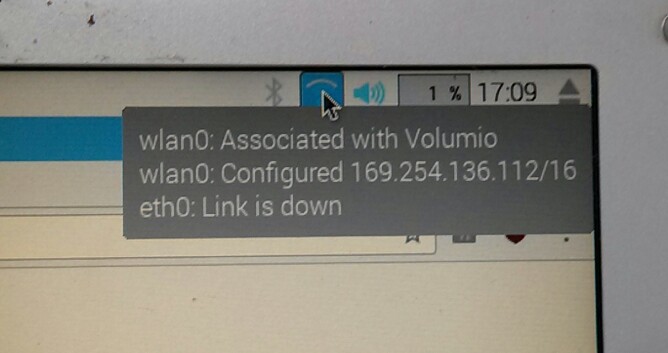

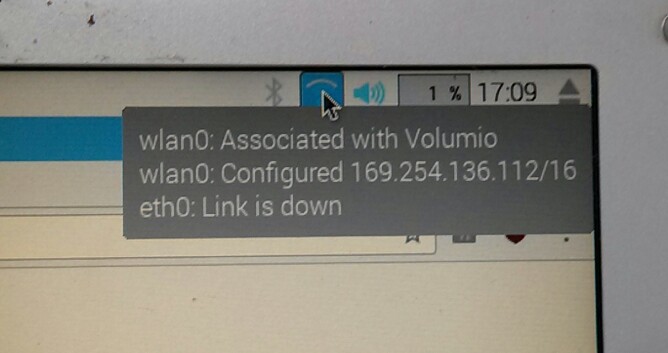

When I started I was amazed, because both my wireless and the LAN connection worked. Later I disconnected the LAN and the WLAN stayed up.

The logs can be found here: https://pablok.stackstorage.com/s/r7IudkrpD1qoPrM. These are the *.oud.txt files

Then I had to leave and I switched off everything.

On returning and restarting only the lan connection came alive. Even after several sudo ifdown wlan0, followed by sudo ifup wlan0 the wifi stayed dead.

Meanwhile it needs to be said that while booting I can see the network Volumio coming up on my laptop only to disappear again after a while.

So, only 1 file: issue0.txt

Hope you can help

I think you need to use ‘sudo iwconfig’

Thanks.

Can you turn OFF Hotspot mode (and save) in Volumio Network settings preference panel?

I guess you want it to connect as client to your home wifi.

Yes, it seems to be sudo iwconfig

BTW: you can scp the issue files to another machine in the network.

greetings,

pablo

The point is to have a solution when there is no network connection… there are plenty of other methods if the network is up, indeed.

So is your problem solved?

Did you take care of turning OFF Hotspot as in previous message?

Did that while connected to the lan. Tried setting a fixed ip for the wlan but it would not let me set an ip, though an ip was shown. Wanted to connect it to one of my local wireless networks and then everything froze.

Now, neither the lan nor the wlan cards get a valid ip address. What is wise?

After several hard resets both the lan and the wlan came up. Now I could configure the wlan ip address. Everything seemed o.k. So I moved the raspi away from the screen and keyboard.

Booted and I had no ip for the wlan. Connected the network cable and it took its ‘old’ ip address, but it would not appear in a browser window. Did not accept a ssh connection either.

It still is problematic.

greetings,

pablo

You have to turn OFF Hotspot to set to wifi client mode.

Did switch off the Hotspot setting before trying to give out a fixed ip for the wlan.

Moved the raspi type 1 model B away to where it belongs. After first boot problems still existed. Pulled the wireless adapter and reconnected. Finally it took its ip address and I now have a working Volumio setup. The only fear I have is what happens when I power down tonight and start again tomorrow?

Thanks for all your help. It made a difference!

greetings,

pablo